brain of mat kelcey...

e10.6 community detection for my twitter network

April 04, 2010 at 12:58 PM | categories: Uncategorized

last night i applied my network decomposition algorithm to a graph of some of the people near me in twitter.

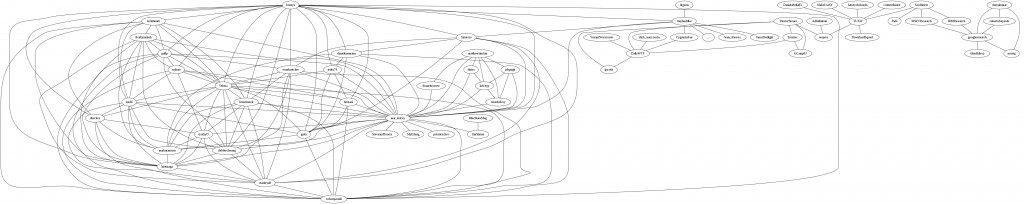

first i built a friend graph for 100 people 'around' me (taken from a crawl i did last year). by 'friend' i mean a mutual follow: alice and bob are friends only if alice follows bob and bob also follows alice.
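
as a rough illustration of that 'friend' definition, here is a minimal python sketch that turns a list of (follower, followee) pairs into an undirected mutual-follow graph. the function name `mutual_friend_graph` and the sample data are made up for this example; it is not the code used for the crawl.

```python
from collections import defaultdict


def mutual_friend_graph(follows):
    """Build an undirected graph from (follower, followee) pairs.

    An edge a-b is kept only when a follows b AND b follows a.
    Returns a dict mapping each node to the set of its mutual friends.
    """
    follow_set = set(follows)
    graph = defaultdict(set)
    for a, b in follow_set:
        # keep the pair only if the reverse follow also exists
        if a != b and (b, a) in follow_set:
            graph[a].add(b)
            graph[b].add(a)
    return dict(graph)


# hypothetical sample data: alice and bob follow each other,
# alice follows carol but carol does not follow back
follows = [("alice", "bob"), ("bob", "alice"), ("alice", "carol")]
friends = mutual_friend_graph(follows)
```

carol drops out entirely here, since a one-way follow doesn't count as a friendship under this definition.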

here's the graph. some things to note though: it was an unfinished crawl (can a crawl of twitter EVER be finished?) and was done in october last year, so it's a bit out of date.

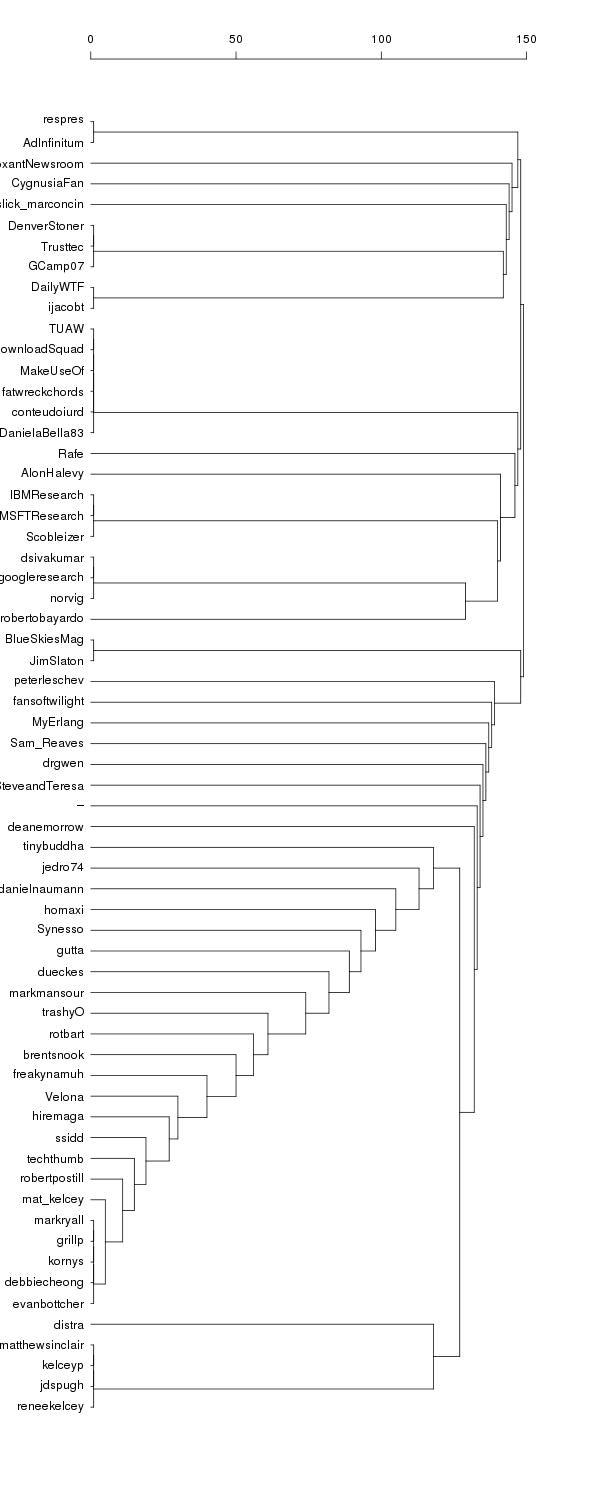

and here is the dendrogram decomposition

some interesting clusterings come out..

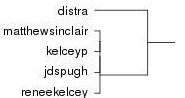

right at the bottom we have a small clique (ie everyone following everyone else) of people i've known from when i was in sydney

this small group connects to the group i'm in; tinybuddha down to evanbottcher; which roughly describes the group of people i've met in melbourne.

the order of the single breakaways in the melbourne group is pretty arbitrary. i get quite different ordering if i run the decomposition multiple times due to the random tie breaking involved. i could either run the decomposition multiple times and work out some kind of averaging or choose another more granular way of deciding how to break ties.

the next connector after sydney and melbourne are unified is deanemorrow, a coworker when i was at distra. this one sticks out for me as being the biggest flaw in the clustering since it would have made more sense to have him placed near distra at the bottom.

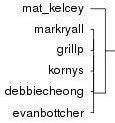

another interesting clique is near me..

it has four thoughtworkers; mark, gill, debs and evan and one sensiser; korny. did korny perhaps work for thoughtworks in a previous life ;)

another interesting note is there exists a path from me to peter norvig (who is too busy for twitter it seems) but only because of the huge connector nodes that exist in twitter. an example in this case is TUAW who follow 30,000+ people and have even more followers. these nodes cause a bit of noise in the system since they are slightly false representations of what a 'friend' means in my mind. not sure how to take these numbers into account...
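
one blunt option, offered only as an assumption and not something tried in this post, would be to drop any account that follows more than some cutoff before building the friend graph. the sketch below assumes the following counts are available separately (e.g. from profile data); `drop_connectors`, `max_following` and the cutoff of 5000 are all hypothetical.

```python
def drop_connectors(follows, following_counts, max_following=5000):
    """Remove follow pairs that touch a 'connector' account.

    follows: list of (follower, followee) pairs.
    following_counts: dict of account -> number of accounts it follows.
    max_following: arbitrary cutoff; accounts above it are treated as hubs.
    """
    hubs = {user for user, n in following_counts.items() if n > max_following}
    return [(a, b) for a, b in follows if a not in hubs and b not in hubs]


# made-up data; only the 30,000 figure for TUAW comes from the text above
follows = [("me", "TUAW"), ("TUAW", "me"), ("me", "friend"), ("friend", "me")]
following_counts = {"me": 150, "friend": 300, "TUAW": 30000}
kept = drop_connectors(follows, following_counts)
```

a hard cutoff is crude; a softer variant might down-weight edges by follow count instead, but that would need a weighted betweenness calculation.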

things to do...

- the biggest oversimplification in this system is how i break ties for deciding which edge to cut out next if multiple exist with the same betweenness. currently it chooses the one that would make the most even sized break (based on smallest standard deviation of the connected components). though this is good for breaking a group into even sizes it's bad since it favours breaking a single element off a large group. this is what has caused the 'laddering' we see in the melbourne group.

- the shortest path algorithm used to calculate edge betweenness is stochastic and if multiple shortest paths exist only one of them is chosen. it'd be better if all were considered with a weighting scheme.

- it might be better to consider vertex betweenness instead of edge betweenness since one person could exist in multiple groups. if i started down this path though i think i'd rather just rewrite the lot using something like the clique percolation method
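
as a rough sketch of the first two items, here is one way they could look in python. `edge_betweenness` counts every shortest path between a pair and splits the credit evenly across them (a brandes-style accumulation, swapped in for the single stochastic path described above), and `pick_edge_to_cut` applies the standard-deviation tie break to edges sharing the top score. all names are hypothetical and this is not the code behind the dendrograms in this post. the graph is assumed to be a dict of node -> set of neighbours with every edge stored in both directions.

```python
import statistics
from collections import deque


def edge_betweenness(graph):
    """Edge betweenness over ALL shortest paths (unweighted, undirected)."""
    score = {frozenset((u, v)): 0.0 for u in graph for v in graph[u]}
    for source in graph:
        # breadth-first search that also counts shortest paths to each node
        dist, n_paths, preds = {source: 0}, {source: 1.0}, {source: []}
        order, queue = [], deque([source])
        while queue:
            node = queue.popleft()
            order.append(node)
            for nb in graph[node]:
                if nb not in dist:
                    dist[nb] = dist[node] + 1
                    n_paths[nb] = 0.0
                    preds[nb] = []
                    queue.append(nb)
                if dist[nb] == dist[node] + 1:
                    n_paths[nb] += n_paths[node]
                    preds[nb].append(node)
        # walk back from the farthest nodes, sharing credit between paths
        dependency = {node: 0.0 for node in order}
        for node in reversed(order):
            for p in preds[node]:
                share = n_paths[p] / n_paths[node] * (1.0 + dependency[node])
                score[frozenset((p, node))] += share
                dependency[p] += share
    # every pair was counted once from each endpoint
    return {edge: s / 2.0 for edge, s in score.items()}


def component_sizes(graph):
    """Sizes of the connected components, found by depth-first search."""
    seen, sizes = set(), []
    for start in graph:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            node = stack.pop()
            size += 1
            for nb in graph[node]:
                if nb not in seen:
                    seen.add(nb)
                    stack.append(nb)
        sizes.append(size)
    return sizes


def pick_edge_to_cut(graph):
    """Highest-betweenness edge; ties go to the most even-sized split."""
    scores = edge_betweenness(graph)
    top = max(scores.values())
    tied = [e for e, s in scores.items() if abs(s - top) < 1e-9]

    def spread_after_cut(edge):
        u, v = tuple(edge)
        trimmed = {n: set(nbs) for n, nbs in graph.items()}
        trimmed[u].discard(v)
        trimmed[v].discard(u)
        return statistics.pstdev(component_sizes(trimmed))

    return min(tied, key=spread_after_cut)


# a 4-cycle: opposite corners have two shortest paths, so credit is split
square = {"a": {"b", "d"}, "b": {"a", "c"}, "c": {"b", "d"}, "d": {"a", "c"}}
scores = edge_betweenness(square)

# a 4-node path: the middle edge carries the most shortest paths
path = {"a": {"b"}, "b": {"a", "c"}, "c": {"b", "d"}, "d": {"c"}}
cut = pick_edge_to_cut(path)
```

if several tied edges also give the same spread, `min` just returns whichever comes first, so some arbitrariness remains; a finer-grained secondary key would be needed to remove it.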