brain of mat kelcey...

the map interpretation of attention

August 19, 2020 at 10:30 AM | categories: talk, three_strikes_rule

a talk i did at melbourne ml/ai on how the attention mechanism can be interpreted as a form of differentiable map. check out the recording!
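as a rough illustration of the "differentiable map" idea, here's a minimal numpy sketch of a soft key/value lookup. it's not taken from the talk; the function name `soft_lookup`, the `temperature` parameter and the toy keys / values are all made up for this example.

```python
import numpy as np

def soft_lookup(query, keys, values, temperature=1.0):
    # hypothetical helper: a "soft" version of values[keys.index(query)]
    # score the query against every key with a dot product
    scores = keys @ query / temperature
    # softmax turns the scores into weights that sum to 1
    weights = np.exp(scores - scores.max())
    weights /= weights.sum()
    # the result is a weighted blend of all the values
    return weights @ values, weights

# toy map with three one-hot keys, each pointing at a 2d value
keys = np.eye(3)
values = np.array([[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]])

# a low temperature makes the lookup behave almost like a hard map
result, weights = soft_lookup(keys[1], keys, values, temperature=0.01)
```

with a low temperature nearly all of the weight lands on the matching key, so `result` is very close to `values[1]`. at higher temperatures the lookup returns a smoother blend of values, and because every step is differentiable, gradients can flow back to the query, the keys and the values.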

measuring baseline random performance for an N way classifier

April 11, 2020 at 12:34 PM | categories: short_tute, three_strikes_rule

quick example code of a simple way to gauge baseline random performance.
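one possible way to do this (a sketch only, and not necessarily the approach in the post) is to score shuffled copies of the true labels against the originals. the function name `random_baseline_accuracy` and the toy label counts are assumptions for illustration.

```python
import numpy as np

def random_baseline_accuracy(y_true, num_trials=200, seed=0):
    # hypothetical helper: accuracy of "predictions" that are just the
    # true labels in a random order, averaged over a number of shuffles
    rng = np.random.default_rng(seed)
    y_true = np.asarray(y_true)
    accuracies = [np.mean(rng.permutation(y_true) == y_true)
                  for _ in range(num_trials)]
    return float(np.mean(accuracies))

# toy imbalanced 3 way problem: 80 / 15 / 5 examples per class
y_true = np.array([0] * 80 + [1] * 15 + [2] * 5)
baseline = random_baseline_accuracy(y_true)
```

in expectation this gives the sum of squared class frequencies (0.8² + 0.15² + 0.05² = 0.665 for these counts), which is well above the 1/3 you might naively expect for a 3 way classifier.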

deriving class_weights from validation data

March 03, 2020 at 06:00 PM | categories: short_tute, three_strikes_rule

quick example code demoing a way to derive class_weights from performance on validation data. this can often speed training up.

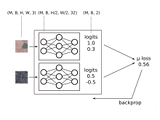
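a minimal sketch of the general idea, assuming we weight each class inversely to its recall on the validation set. the post may well use a different rule; `class_weights_from_validation`, the `eps` smoothing term and the toy labels are hypothetical.

```python
import numpy as np

def class_weights_from_validation(y_true, y_pred, num_classes, eps=1e-3):
    # hypothetical helper: classes the model does badly on get more weight
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    recalls = np.zeros(num_classes)
    for c in range(num_classes):
        mask = y_true == c
        # treat a class with no validation examples as "fine" (recall 1.0)
        recalls[c] = np.mean(y_pred[mask] == c) if mask.any() else 1.0
    # inverse recall, with eps to avoid dividing by zero
    weights = 1.0 / (recalls + eps)
    # normalise so the average weight is 1.0
    weights = weights / weights.mean()
    return {c: float(w) for c, w in enumerate(weights)}

# toy validation run: class 0 is always right, class 2 is mostly wrong
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 1, 2, 2, 2, 2])
y_pred = np.array([0, 0, 0, 0, 1, 1, 0, 0, 2, 0, 0, 0])
class_weights = class_weights_from_validation(y_true, y_pred, num_classes=3)
```

the result is a dict of class index to weight, which is the shape keras expects for the `class_weight` argument of `fit`. here class 2 ends up with the largest weight since it has the lowest validation recall.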

initing the biases in a classifier to more closely match training data

February 27, 2020 at 12:00 PM | categories: short_tute, three_strikes_rule

some code showing how you can init the bias of a classifier to match the base distribution of your training data.
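a small sketch of one common way to do this, assuming a softmax output layer: set the final bias to the log of the class frequencies, so the untrained model already predicts the base distribution. the helper names and toy label counts are made up for this example and aren't from the post.

```python
import numpy as np

def bias_from_class_frequencies(y_train, num_classes, eps=1e-8):
    # hypothetical helper: log of the empirical class frequencies
    counts = np.bincount(y_train, minlength=num_classes)
    priors = counts / counts.sum()
    # eps guards against log(0) for classes missing from the training data
    return np.log(priors + eps)

def softmax(logits):
    exps = np.exp(logits - logits.max())
    return exps / exps.sum()

# toy imbalanced 3 way problem: 80 / 15 / 5 examples per class
y_train = np.array([0] * 80 + [1] * 15 + [2] * 5)
bias = bias_from_class_frequencies(y_train, num_classes=3)

# with zero logits from the rest of the network, the output is the base rate
initial_probs = softmax(bias)
```

since softmax(log p) = p, `initial_probs` comes out at roughly [0.8, 0.15, 0.05]. that means the early training steps don't have to be spent just learning the class imbalance.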

popular posts...

FPGA wavenets : eurorack audio processing neural nets running at ~200,000 inferences/sec (oct 2023)

dithernet very slow movie player : a GAN that slowly plays a movie over a year on an eink screen (oct 2020)

evolved channel selection : neural networks robust to any subset of input channels, at any resolution (mar 2021)

ensemble nets : training ensembles as a single model using jax on a tpu pod slice (sept 2020)

bnn : counting bees with a rasp pi (may 2018)

drivebot : learning to do laps with reinforcement learning and neural nets (feb 2016)

wikipedia philosophy : do all first links on wikipedia lead to philosophy? (aug 2011)

some papers from my time at google research / brain...

- Natural Questions: a Benchmark for Question Answering Research

- Using Simulation and Domain Adaptation to Improve Efficiency of Deep Robotic Grasping

- WikiReading: A Novel Large-scale Language Understanding Task over Wikipedia

my honours thesis

the co-evolution of cooperative behaviour (1997) evolving neural nets with genetic algorithms for communication problems.

old projects...

- latent semantic analysis via the singular value decomposition (for dummies)

- semi supervised naive bayes

- statistical synonyms

- round the world tweets

- decomposing social graphs on twitter

- do it yourself statistically improbable phrases

- should i burn it?

- the median of a trillion numbers

- deduping with resemblance metrics

- simple supervised learning / should i read it?

- audioscrobbler experiments

- chaoscope experiment